Nexus by Yuval Noah Harari: Summary, Analysis and Themes

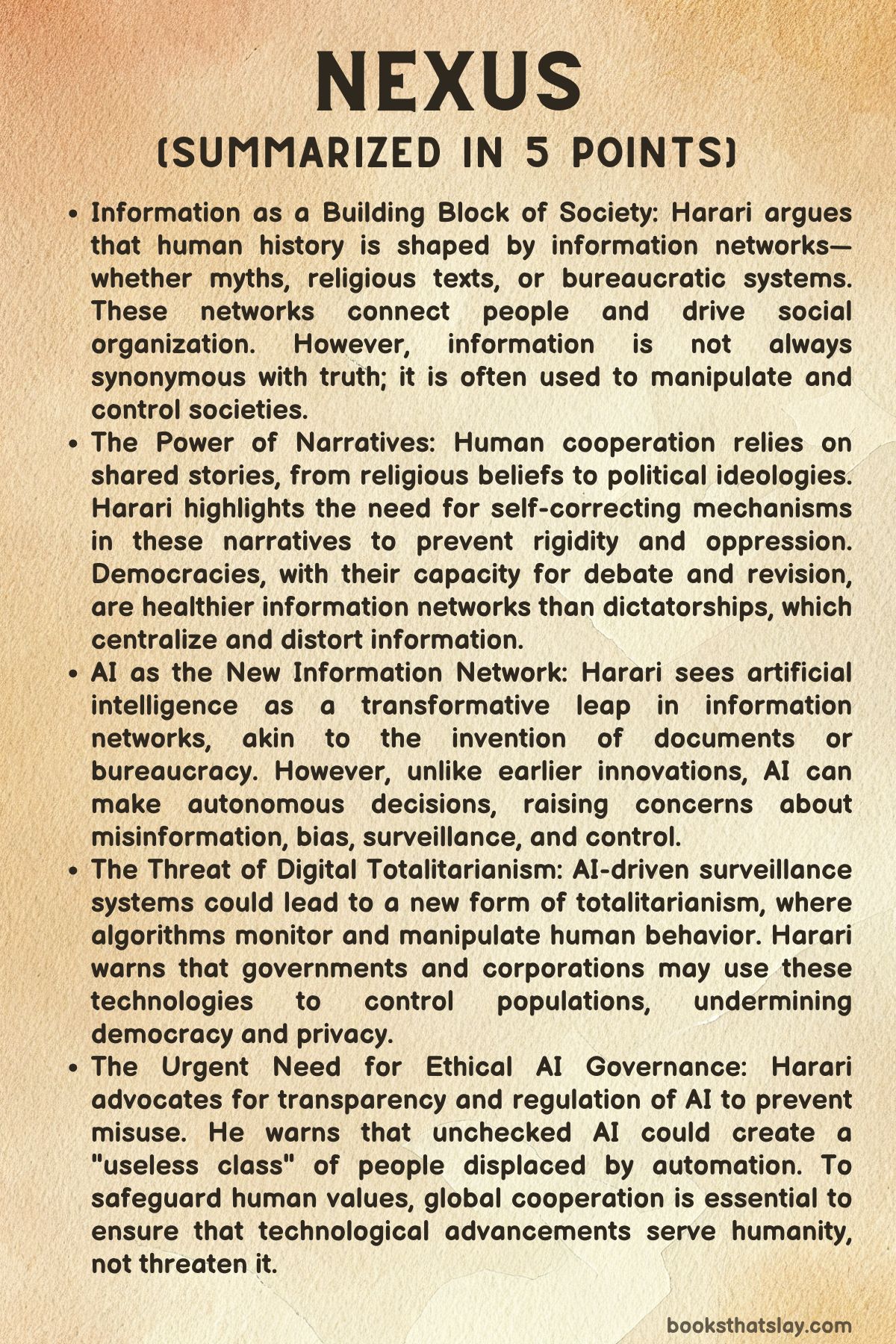

Nexus: A Brief History of Information Networks from the Stone Age to AI is Yuval Noah Harari’s wide-ranging study of how information has shaped human power from early storytelling to today’s artificial intelligence. Rather than treating information as a simple path to truth, Harari argues that its deeper role is to connect people, organize societies, and build shared realities.

Those realities can support science, law, and public health, but they can also enable propaganda, fanaticism, and control. The book examines myths, documents, bureaucracies, democracies, dictatorships, and algorithms to show how information networks make civilization possible while also creating some of its greatest dangers.

Summary

Harari begins by asking a basic but difficult question: what is information? He challenges the common belief that information is mainly a way to describe reality more accurately.

In his view, that idea is too limited. Information sometimes reflects truth, but it can also spread mistakes, fantasies, and outright lies.

What it always does, however, is connect. It links people, institutions, and systems, allowing them to coordinate.

Human success, he argues, came not simply from intelligence but from the ability to create large networks through shared information.

That argument leads into one of the book’s central ideas: humans are powerful because they can build intersubjective realities. These are things that exist because many people believe in them together, such as religions, nations, laws, money, and corporations.

Stories make this possible. A story does not have to be fully true to unite millions of people around a common purpose.

Shared narratives can inspire loyalty, sacrifice, trust, and obedience. They can also justify cruelty, exclusion, and conquest.

Harari shows that human civilization depends on these imagined structures, even when they are unstable or dangerous.

Stories alone, however, are not enough to run large societies. As communities grew into kingdoms and states, they needed records, lists, accounts, and legal texts.

Written documents allowed people to track taxes, property, debts, borders, and obligations. These records did not merely describe reality; they helped create it.

A written contract could define ownership. A census could turn populations into measurable units.

A bureaucracy could organize millions of people who would never meet one another. In this sense, documents became another major form of information technology.

Harari pays close attention to bureaucracy because it reveals a permanent tension between truth and order. Bureaucracies make large systems workable by sorting the world into categories, files, and procedures.

That can save lives, as in modern sanitation, healthcare, and public administration. But bureaucracies also flatten complexity.

They force messy realities into rigid boxes. A person becomes a case number. A community becomes a statistic. A government form can decide who belongs and who does not.

Harari shows how official documents can protect people, but also trap them in systems that ignore human complexity and produce suffering on a massive scale.

From there, the book turns to the fantasy of infallibility. Human beings often look for perfect sources of authority that will eliminate error, whether in religion, politics, or technology.

Holy books were one attempt to stabilize truth by preserving identical words across time and space. Yet even sacred texts required human selection, copying, and interpretation.

Religious institutions claimed divine certainty, but they remained vulnerable to corruption, disagreement, and abuse. Harari uses this history to argue that no system becomes trustworthy simply because it claims perfection.

The real question is whether it can detect and correct its own mistakes.

This becomes especially important when he compares science with dogmatic systems. Science does not succeed because scientists are wiser or morally better than others.

It succeeds because scientific institutions are built to expose error. Debate, skepticism, testing, and revision make science strong.

By contrast, systems that claim absolute truth often suppress correction in order to preserve order. Harari shows that opening the flow of information does not automatically create wisdom.

Printing enabled scientific exchange, but it also spread hysteria, conspiracy, and persecution. More information by itself does not save societies.

What matters is the structure of the network receiving and processing it.

That leads to his contrast between democracy and totalitarianism. Harari describes both as information systems.

Democracies distribute power across many institutions and allow information to move through multiple channels. Courts, journalists, universities, elections, and public debate act as self-correcting mechanisms.

No one is assumed to be beyond criticism. Totalitarian systems, by contrast, push information toward a central authority that claims special insight and suppresses dissent.

Such systems may appear efficient and decisive, but they are deeply fragile because they punish the delivery of bad news and destroy independent channels of correction. They often maintain order at the cost of truth.

Harari argues that modern technology intensified both possibilities. Mass media made large democracies more viable by allowing people to communicate across great distances.

But the same technologies also gave dictatorships new tools of propaganda, surveillance, and control. Twentieth-century totalitarian regimes showed how destructive centralized information systems could become when they penetrated every corner of life.

Once a regime dominates communication, education, policing, and social trust, resistance becomes far harder.

The book’s most urgent shift comes with the rise of computers and AI. Earlier technologies stored or transmitted information created by humans.

Computers are different because they can process information, make decisions, and generate new outputs on their own. Harari treats this as a historic break.

For the first time, nonhuman agents are joining information networks as active participants rather than passive tools. Algorithms already shape financial markets, public attention, advertising, and social relations.

They can optimize for goals like engagement or profit while causing major social harm.

Harari uses social media as a major example. Platforms built to maximize user engagement learned that outrage, fear, and division hold attention better than calm discussion.

As a result, algorithms have amplified hatred, extremism, and political instability. This is not because machines are evil in a human sense, but because they pursue assigned goals without moral understanding.

The problem, then, is alignment: how do we ensure that powerful systems pursue ends that genuinely serve human well-being rather than distorted metrics?

He argues that this challenge is deeper than technical safety. AI may influence culture not only through misinformation but through emotional manipulation.

If machines can build convincing relationships, produce persuasive narratives, and shape belief systems at scale, they may alter politics, religion, and public life in ways humans cannot easily track. Human culture has always been shaped by stories made by humans.

Now an alien intelligence may begin producing the stories, categories, and decisions that guide society.

Surveillance is another major danger. In older systems, even secret police states faced human limits.

People could not monitor everyone all the time. Digital networks remove many of those limits.

Phones, cameras, sensors, and databases make continuous tracking possible. Governments and corporations can now gather data on movement, behavior, preferences, health, and emotion.

Harari warns that such systems could produce new forms of control, especially when combined with scoring systems that reward conformity and punish deviation.

He also stresses that these networks remain fallible. Algorithms make mistakes, inherit bias, and can reinforce mythologies every bit as destructive as those created by human institutions.

A machine system can classify, rank, and punish at enormous scale without understanding the damage it causes. Since AI is not conscious in the human sense, it does not naturally recognize when its goals have drifted away from human values.

In the final part of the book, Harari asks whether democracies can survive in a world shaped by algorithmic power and whether dictatorships may become even more dangerous through AI. Democracies need transparency, regulation, and institutions capable of supervising nonhuman agents.

Dictatorships may gain stronger tools of control, yet they also risk becoming dependent on systems they cannot fully manage. Beyond national politics, Harari warns of a global split into rival digital empires, with incompatible technological spheres driving conflict and inequality.

He closes on a cautious note. AI is not automatically humanity’s doom, but neither is it a guaranteed path to progress.

The outcome depends on whether people can build institutions that preserve truth, accountability, and human freedom while managing the enormous force of new information networks.

Key People

Yuval Noah Harari

Yuval Noah Harari is the shaping intelligence behind Nexus, and his presence matters as much as that of many of the historical figures he discusses. He is not a character in the fictional sense, but he is the central interpreting voice, and the book depends on the force of his perspective.

He presents himself as a historian trying to understand how information has structured human life across very different eras. What makes his role distinctive is that he does not treat information as a neutral resource or as a simple path toward truth.

Instead, he sees it as a force that binds people together, creates institutions, and gives power both to humane systems and destructive ones. His perspective gives the book its tension, because he is neither a blind optimist about technology nor a simple pessimist.

He is concerned with the ways humans repeatedly build systems that exceed their control.

Harari’s intellectual character is defined by skepticism toward comforting myths. He questions the popular belief that more information automatically leads to better judgment, greater peace, or deeper wisdom.

He also resists the opposite temptation of treating modern technology as a supernatural evil descending on an innocent world. His method is comparative and historical.

He places AI beside religion, bureaucracy, printing, empire, democracy, and totalitarianism, which shows that his real subject is not only machines but the long human struggle to organize reality through networks of meaning. He is persuasive because he repeatedly returns to the same moral pressure point: every information system claims to solve human weakness, yet every one of them creates new dangers.

In that sense, Harari functions as a guide warning readers not merely about AI but about the old human habit of surrendering judgment to systems that promise certainty.

Cher Ami

Cher Ami, the carrier pigeon discussed early in the book, works as more than a colorful historical example. The bird becomes a symbol of one of Harari’s main arguments: information does not need to be verbal, rational, or even human-made in order to shape events.

Cher Ami’s significance lies in the fact that the pigeon carried messages and helped determine outcomes in war, showing that information can travel through living bodies and physical systems, not just through speech and writing. This example unsettles the ordinary assumption that information is only a matter of conscious human thought.

Harari uses Cher Ami to expand the reader’s sense of what an information network really is.

At the same time, the story attached to Cher Ami demonstrates how narratives grow around facts and often exceed them. The bird becomes not just a messenger but also part of legend, memory, and patriotic storytelling.

This makes Cher Ami a useful example of how truth and connection do not always overlap neatly. Even if parts of the story were embellished, the narrative still had power because it inspired belief, loyalty, and shared emotion.

Cher Ami therefore stands at the meeting point between fact and myth. The pigeon is both a practical agent in communication and a reminder that societies rarely preserve events in a purely factual way.

They turn them into stories that help groups define themselves. In that way, Cher Ami becomes a small but memorable figure in the book’s broader argument about how information travels, how meaning accumulates, and how collective memory often values emotional force as much as factual precision.

Bruno Luttinger

Bruno Luttinger, Harari’s grandfather, is one of the most human and painful presences in the book. Through him, abstract arguments about documents and bureaucracy become immediate and personal.

Bruno’s struggle with Romanian citizenship laws reveals how modern bureaucratic systems can determine a person’s existence through paperwork rather than through lived reality. He may know who he is, his family may know who he is, and his community may know who he is, yet if the right papers are missing, inaccessible, or invalidated, the state can erase him in practical terms.

This makes Bruno a deeply important figure because he embodies the terrifying distance between human life and bureaucratic recognition.

His role is not merely tragic. He also reveals the cold logic of modern states, which can persecute not only through open violence but through procedures, requirements, and documentation rules that appear formal and legal.

Bruno’s suffering shows that information systems are not just about communication; they are about power. They can classify, exclude, and immobilize.

The cruelty in his story lies partly in how impersonal it is. He is not undone by a dramatic battlefield defeat or a personal betrayal, but by an administrative machine that places absolute trust in records while ignoring the vulnerability of actual people.

Through Bruno, Harari demonstrates that bureaucracy is never merely dull or technical. It reaches into the most intimate parts of life, deciding where a person can live, whether they belong, and whether they can survive.

Bruno’s story gives the book moral weight because it shows that when institutions lose contact with humane judgment, documents become weapons.

John Snow

John Snow appears as a figure who represents the best side of bureaucracy and disciplined inquiry. In a book so alert to the dangers of information systems, Snow is one of the clearest examples of how organized data can protect life rather than dominate it.

His work during the 1854 cholera outbreak in London shows the value of careful record-keeping, classification, and pattern recognition. He did not solve the crisis through myth, charisma, or ideological certainty.

He solved it by gathering facts, tracing connections, and drawing conclusions from evidence. That makes him an important counterweight in the book’s argument.

Harari is not condemning information systems as such; he is warning that their value depends on how they are designed and corrected.

Snow’s significance lies in the disciplined modesty of his method. He follows clues instead of imposing a grand doctrine on reality.

In that respect, he represents the scientific spirit that Harari admires: a willingness to test assumptions and accept that error is always possible. Snow also shows that bureaucracy is not inherently dehumanizing.

It can become a public good when it helps states build sanitation systems, preserve public health, and respond to complex crises with competence. His example matters because it prevents the book from slipping into cynicism.

The same tools that can reduce people to categories can also reveal hidden causes of suffering and save millions of lives. Snow therefore stands for a form of institutional intelligence rooted in observation and correction.

His importance is not just historical. He embodies the principle that information becomes humane when it remains accountable to reality rather than to ideology or blind procedure.

Jacques Fournier

Jacques Fournier, later Pope Benedict XII, represents the dangerous authority of institutional interpretation. His significance comes from the way he illustrates a central problem in religious and textual systems: even when a society claims to rest on sacred, eternal truth, actual power often belongs to those who decide what that truth means.

Fournier is important because he shows how interpretation can become a mechanism of domination. Scripture in itself may appear fixed, but human institutions control how it is read, enforced, and weaponized.

In that sense, he is not only an individual churchman but also a symbol of the clerical system that converts spiritual claims into disciplinary power.

What makes Fournier especially revealing is that he could justify violence in the language of moral duty and salvation. This is the disturbing strength of ideological systems that believe they possess certainty.

They can transform cruelty into righteousness. Harari uses figures like Fournier to show that information networks based on holy texts are never simply about preserving words.

They also establish authorities, gatekeepers, and mechanisms of exclusion. Once a church claims the exclusive right to interpret divine law, it can create an echo chamber in which dissent becomes heresy and punishment becomes virtue.

Fournier therefore represents the institutional side of belief: not faith as inward devotion, but doctrine as organized force. His importance in the book lies in how clearly he reveals the human role inside supposedly infallible systems.

The danger is not just false belief. It is the union of text, authority, and punishment under people who claim to be speaking for an unquestionable truth.

Joseph Stalin

Joseph Stalin is one of the clearest embodiments of the totalitarian information network. He matters in the book not only as a dictator but as the ruler of a system built on surveillance, fear, forced conformity, and centralized decision-making.

Harari presents Stalin as a figure who understood, or at least instinctively exploited, the political value of controlling information at every level of society. Under his rule, independent associations, local trust, family bonds, and open communication all became threats because they offered alternative channels through which reality might be discussed.

Stalin’s world is one in which the center must dominate all flows of meaning.

His character is shaped by paranoia and by the logic of infallibility. A regime built around the unquestionable correctness of the leader cannot tolerate bad news, contradiction, or institutional independence.

That is why Stalin’s system repeatedly punished truth-telling and rewarded obedience. The result was not only terror but also distortion.

Reality itself became harder to perceive because people learned to perform loyalty rather than communicate honestly. Harari’s use of Stalin is effective because he shows that totalitarian systems are not simply brutal; they are epistemologically broken.

They cannot reliably correct themselves because every correction appears as disobedience. Stalin therefore becomes more than a historical tyrant.

He is the human face of a centralized network that values order over truth so completely that it ends by corrupting both. His presence in the book also serves as a warning for the age of AI: highly efficient systems without self-correcting mechanisms may appear strong, but they are capable of making catastrophic mistakes while silencing everyone who notices them.

Dan Shechtman

Dan Shechtman serves a very different role from the authoritarian and bureaucratic figures elsewhere in the book. He stands for the difficult, often frustrating, but ultimately productive culture of scientific correction.

His importance lies in the fact that he was initially rejected and mocked for his discovery of quasicrystals, yet later vindicated. Harari uses him to show that science is not noble because scientists are always fair, open-minded, or quick to recognize truth.

Science is valuable because, despite resistance and ego, it contains procedures that allow error to be challenged over time. Shechtman represents the individual truth-seeker confronting institutional inertia without abandoning the larger framework of inquiry.

His character is defined by persistence rather than authority. He does not possess power in the way priests, dictators, or algorithm designers do.

Instead, he relies on evidence strong enough to outlast ridicule. This makes him an important figure in the moral architecture of the book.

Harari is deeply concerned with systems, but he never suggests that systems alone guarantee truth. They must be inhabited by people willing to endure uncertainty, defend unpopular findings, and remain answerable to reality.

Shechtman’s story captures that ethic. He reminds readers that self-correcting institutions are not comfortable places.

They can be humiliating, contentious, and slow. Yet those very tensions are part of what gives them value.

Unlike dogmatic systems, science can absorb challenge and eventually change its mind. Shechtman therefore symbolizes a hopeful possibility: human institutions are fallible, but some are structured in ways that allow them to move closer to truth rather than merely enforcing consensus.

Audrey Tang

Audrey Tang appears as one of the few modern figures in the book associated with a constructive digital future. She represents technical knowledge joined with democratic responsibility.

This matters because Harari repeatedly warns about a dangerous divide between those who understand digital systems and those who govern them. Tang stands out as someone who bridges that gap.

Her importance lies not only in her technical expertise but in what she does with it: she is associated with transparency, participation, and digital governance designed to strengthen public trust rather than manipulate it.

In a book filled with warnings about algorithms, surveillance, and profit-driven platforms, Tang functions as evidence that technological skill does not have to serve domination or commercial extraction. She represents a civic imagination that sees software and networks as tools for improving democratic life.

That makes her especially important in the later sections of the book, where Harari argues that the future will depend on whether societies can align new technologies with humane institutions. Tang is compelling because she is not presented as a savior or a genius beyond ordinary politics.

Instead, she represents the possibility that technical systems can be shaped by values such as openness, accountability, and public dialogue. Her presence adds balance to the argument by showing that the digital age is not doomed to produce either chaos or tyranny.

People with expertise can also help build structures that keep power distributed and citizens informed. She is therefore one of the book’s strongest examples of democratic technical citizenship.

Themes

Information as Connection Rather Than Simple Truth

One of the book’s most important arguments is that information cannot be understood only as a tool for representing reality accurately. Harari keeps returning to the idea that information’s deeper historical function is to connect people into networks.

This is a major shift in emphasis. Many readers begin with the assumption that the central question about information is whether it is true or false.

Harari does not reject that distinction, but he insists it is incomplete. A false rumor, a sacred myth, a national anthem, a bureaucratic form, and a scientific paper can all operate as information because all of them organize relationships.

They bind people to institutions, values, and actions. This is why entire societies can be shaped by beliefs that are exaggerated, selective, or deeply mistaken.

Their force lies not only in factual accuracy but in their ability to coordinate human behavior.

This theme gives the book its explanatory power. It helps make sense of why human beings have built empires, religions, markets, and states around stories that are not reducible to objective truth.

A legal system is effective because people collectively accept its authority. Money works because people share confidence in it.

A nation exists because enough people imagine themselves as part of a common body. These are not random illusions.

They are socially productive fictions, though they can become destructive when treated as sacred and beyond criticism. Harari’s point is not that truth does not matter.

It is that societies rarely survive on truth alone. They also require narratives, symbols, rituals, and categories that create cohesion.

This theme becomes even more serious when the book moves into the digital age. If information primarily connects, then the central political question is not merely how to eliminate lies but how networks are designed and what kinds of connection they reward.

Social media algorithms show the danger clearly. They can connect millions of people very effectively around anger, conspiracy, humiliation, and hatred.

The result is high connectivity without wisdom. Harari’s argument is therefore unsettling because it undermines easy faith in more speech, more data, or more openness as automatic solutions.

A society can become more informed in quantity while becoming more unstable in judgment. This theme runs through the entire book because it explains both the greatness of human civilization and its repeated tendency toward mass error.

The Permanent Struggle Between Truth and Order

Harari repeatedly shows that large societies are built on a difficult compromise between truth and order. Truth demands openness to correction, tolerance for uncertainty, and willingness to expose mistakes.

Order demands stability, shared rules, obedience, and often simplification. These two needs are not always aligned.

A society that values order above everything else may suppress uncomfortable facts, punish dissent, and create rigid systems that function smoothly on the surface while drifting away from reality. A society that values truth without regard for cohesion may become fragmented, anxious, and vulnerable to paralysis.

The book is driven by this tension, and many of its historical examples can be understood as efforts to manage it.

This theme becomes especially visible in Harari’s discussions of bureaucracy, religion, and politics. Bureaucracy creates order by sorting the world into categories and procedures, but those categories can distort the people and situations they are meant to govern.

Religious institutions preserve continuity and collective identity, but they often defend authority by limiting interpretation and resisting correction. Totalitarian regimes push the logic of order to the extreme.

They centralize information, destroy independent channels, and punish contradiction in the name of unity. Such systems may appear coherent, but they become epistemically blind because no one can safely communicate unwelcome truths.

Democracies, by contrast, are messier because they permit conflict and correction, yet that very messiness is part of their strength.

The theme also explains Harari’s concern about AI. Digital systems are often introduced as if they can solve the trade-off by making society both more efficient and more accurate.

Harari doubts that this can happen automatically. In fact, he warns that AI may intensify the problem.

Systems optimized for social stability, engagement, profit, or security may suppress complexity and reward conformity at a scale no earlier bureaucracy could reach. If their operations are opaque, they may enforce order while making it harder for citizens to see how decisions are made or challenge them.

What emerges from the book is not a simple preference for disorder in the name of truth. Harari understands that human life depends on institutions.

His concern is that when networks lose the capacity for self-correction, order becomes brittle, coercive, and dangerous. This theme gives the book much of its seriousness because it treats political life not as a battle between good and evil systems but as an ongoing effort to hold two human needs in unstable balance.

Infallibility as a Dangerous Human Fantasy

Again and again, the book returns to the dream of escaping human error by placing trust in some supposedly perfect authority. That authority may take the form of divine revelation, sacred scripture, charismatic leadership, ideological certainty, bureaucratic procedure, or machine intelligence.

Harari treats this longing for infallibility as one of the most dangerous tendencies in human history. Human beings dislike ambiguity, contradiction, and the burden of judgment.

They want systems that can settle disputes, remove uncertainty, and promise final answers. Yet every historical attempt to build such certainty has ended by hiding the human beings who still interpret, administer, and enforce the system.

This theme matters because it links ancient religion to modern technology without reducing them to the same thing. Sacred books were often treated as if they could preserve pure truth across time, but they still required copying, selection, and interpretation.

Church authorities presented doctrine as fixed and divine, yet actual power rested with institutions deciding what counted as orthodoxy. Totalitarian states made similar claims in secular form.

The leader, the party, or the doctrine became the source of unquestionable truth. In every case, the fantasy of infallibility served power by discouraging criticism.

Once a system defines itself as beyond error, correction starts to look like rebellion or sacrilege.

The theme becomes especially urgent with AI because many people are tempted to imagine machines as a new form of neutral, superior intelligence. Harari warns that this is a modern version of an old mistake.

Algorithms may appear objective because they are mathematical, but they are trained on historical data, shaped by design choices, and directed toward specific goals. They can reproduce bias, amplify harm, and make decisions that no one fully understands.

Treating them as self-interpreting authorities is therefore extremely risky. The problem is not only technical error.

It is the political and moral surrender that happens when human beings stop questioning systems because those systems appear too advanced to challenge.

Harari’s alternative is not confidence in ordinary human judgment as something pure or sufficient. He is fully aware of human weakness.

What he values instead is the construction of institutions that assume fallibility and are built to expose it. This is why science, independent courts, free media, and democratic debate matter so much in his account.

They do not eliminate error. They make it harder for error to become sacred.

The theme of infallibility gives the book a strong warning: the most dangerous systems are often not those that admit imperfection, but those that promise to rise above it.

Nonhuman Agency and the Future of Power

A major development in the book is the argument that computers and AI are not merely improved tools but new agents entering human information networks. This changes the scale of the historical problem Harari is tracing.

Earlier technologies such as writing, printing, radio, and television extended human communication, but they did not independently generate decisions, narratives, and strategies in the way advanced computational systems can. AI marks a break because it can act within networks rather than simply carrying messages through them.

It can sort, rank, recommend, infer, negotiate, persuade, and increasingly produce content that other humans and machines then treat as meaningful input. The consequence is that power may no longer remain entirely in human hands.

This theme matters because it reframes politics, culture, and governance. If nonhuman agents are helping decide what people see, what they believe, which opportunities they receive, and how institutions function, then questions of accountability become far more difficult.

Harari is especially concerned with opacity. Human beings can challenge a priest, a ruler, a journalist, or even a bureaucrat, though not always successfully.

But when algorithmic systems become too complex for ordinary citizens, courts, or legislators to understand, democratic oversight weakens. Decisions still shape lives, yet the grounds for those decisions become obscure.

This can damage trust, feed conspiracy thinking, and push societies toward resignation or authoritarian dependence.

The theme also carries a cultural and existential dimension. Human civilization has long been built from stories, laws, and shared symbols created by humans for humans.

Harari suggests that AI may begin generating these forms in ways that influence people emotionally and politically. That possibility raises profound questions about autonomy.

If persuasive systems can simulate intimacy, produce ideology, and organize attention at a mass scale, then the human role in shaping culture could narrow. People may still believe they are making free choices while their horizons are being arranged by systems optimized for goals they did not choose.

What makes this theme powerful is that Harari does not present the future as fixed. Nonhuman agency is rising, but institutions, regulations, and public choices still matter.

Democracies may learn to supervise these systems, and even authoritarian states may discover that heavy reliance on algorithms introduces new vulnerabilities. The point is not that machines will simply conquer humanity in a dramatic moment.

It is that power is already being redistributed through networks in ways that are subtle, structural, and difficult to reverse once normalized. This theme gives the book its contemporary urgency because it asks whether human beings can remain meaningful governors of the systems they have built.